Acta Agriculturae Zhejiangensis ›› 2026, Vol. 38 ›› Issue (2): 383-396. DOI: 10.3969/j.issn.1004-1524.20250100

Lightweight and improved apple orchard fruit recognition model CS_YOLOv7

OUYANG Yu, LIU Shuo*, LI Mengmin, ZHANG Peng

School of Mathematics & Computer Science, Wuhan Polytechnic University, Wuhan 430048, Hubei, China

Received: 2025-02-10 Published: 2026-02-25 Online: 2026-03-24

About the author: OUYANG Yu, research interests: computer vision and object detection. E-mail: 528038794@qq.com

Corresponding author: *LIU Shuo, E-mail: 874477154@qq.com

Funding: Civil Aviation Joint Research Fund of the National Natural Science Foundation of China (U1833119); Key Research and Development Program of Hubei Province (2023BBB046)

摘要:

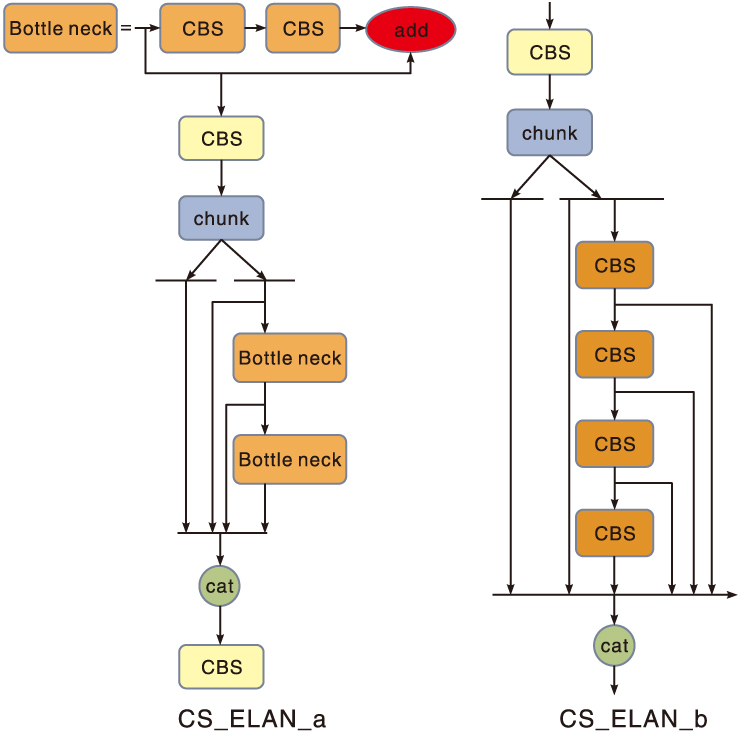

针对当前苹果园果实识别面临的模型参数规模庞大、计算资源消耗量过多、模型检测精度和检测速度难以实现良好平衡的问题,提出一种基于YOLOv7改进的轻量化模型CS_YOLOv7。首先,引入通道分离的高效层注意网络(CS_ELAN)和快速空间金字塔池化(SPPF)模块,实现模型整体轻量化;其次,采用K-means++算法生成适合本研究数据集的新先验框,增强模型定位目标的能力;然后,改用Wise-IoU损失函数代替原损失函数,减少低质量示例的有害梯度,提升模型收敛速度和目标识别的定位能力;最后,通过添加一种基于空间和通道维度的注意力机制SE_CBAM,使模型在更全局角度下提取苹果小目标的关键特征。结果表明:相较于原YOLOv7模型,改进模型的平均精确率(交并比为0.5,mAP@0.5)提升了1.7百分点,模型大小减少22.3 MB,检测速度提升118.9 frame·s-1,模型参数量和计算量分别减少31.8%和16.1%。CS_YOLOv7模型在优化准确度的同时实现了多方位的轻量化,可应用于果园幼果数据集目标的快速识别,为今后高效的实时目标识别和后续的机器采摘奠定基础。

Cite this article

OUYANG Yu, LIU Shuo, LI Mengmin, ZHANG Peng. Lightweight and improved apple orchard fruit recognition model CS_YOLOv7[J]. Acta Agriculturae Zhejiangensis, 2026, 38(2): 383-396.

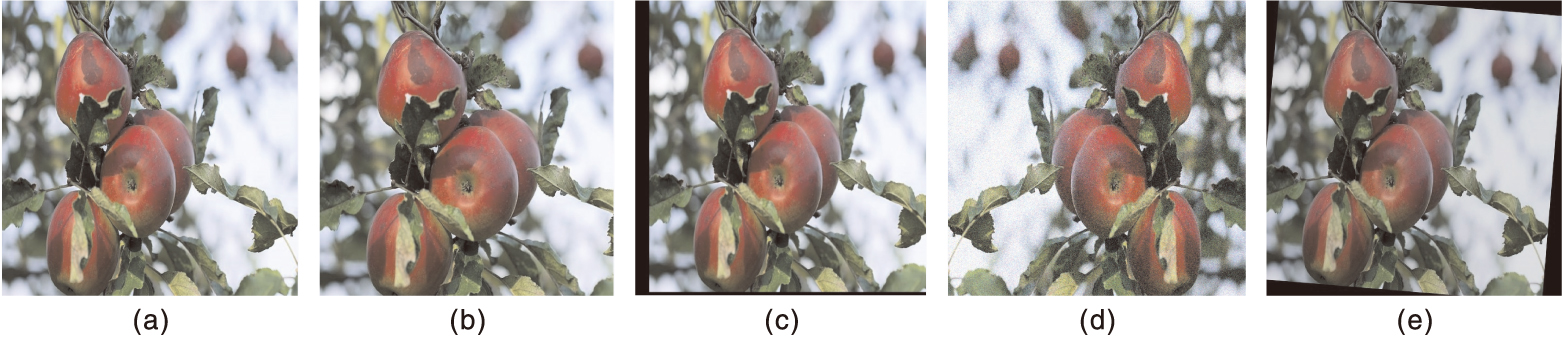

Fig.1 Examples of dataset augmentation. a, Original image; b, Random cropping; c, Random translation; d, Random flipping plus Gaussian noise; e, Random rotation plus random brightness.

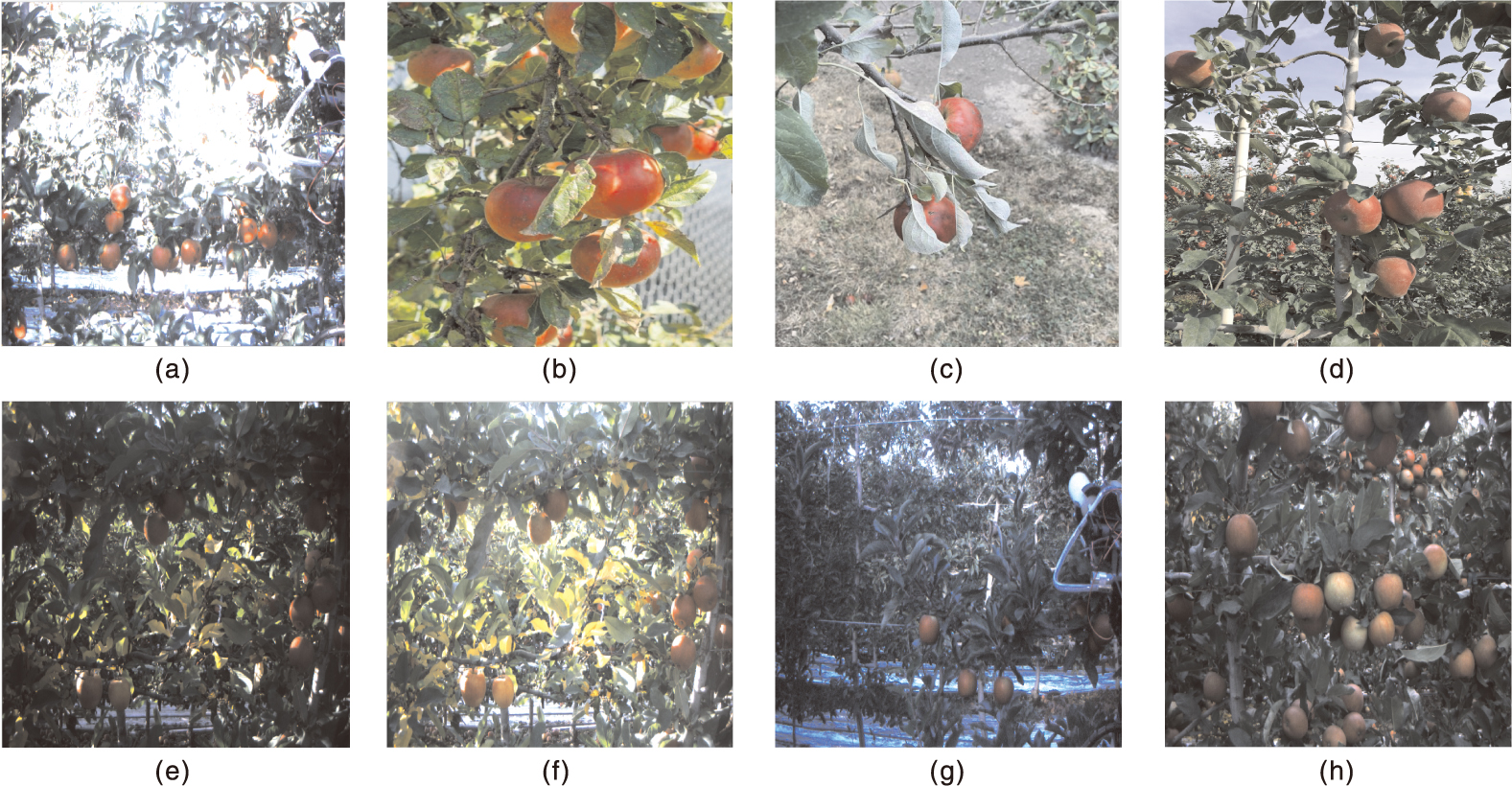

Fig.2 Examples of images under different conditions. a, Backlighting; b, Front lighting; c, Occlusion by fruit leaves; d, Occlusion by lampposts; e, Early morning sunrise scene; f, Midday strong sunlight scene; g, Sparse fruit scene; h, Dense fruit scene.

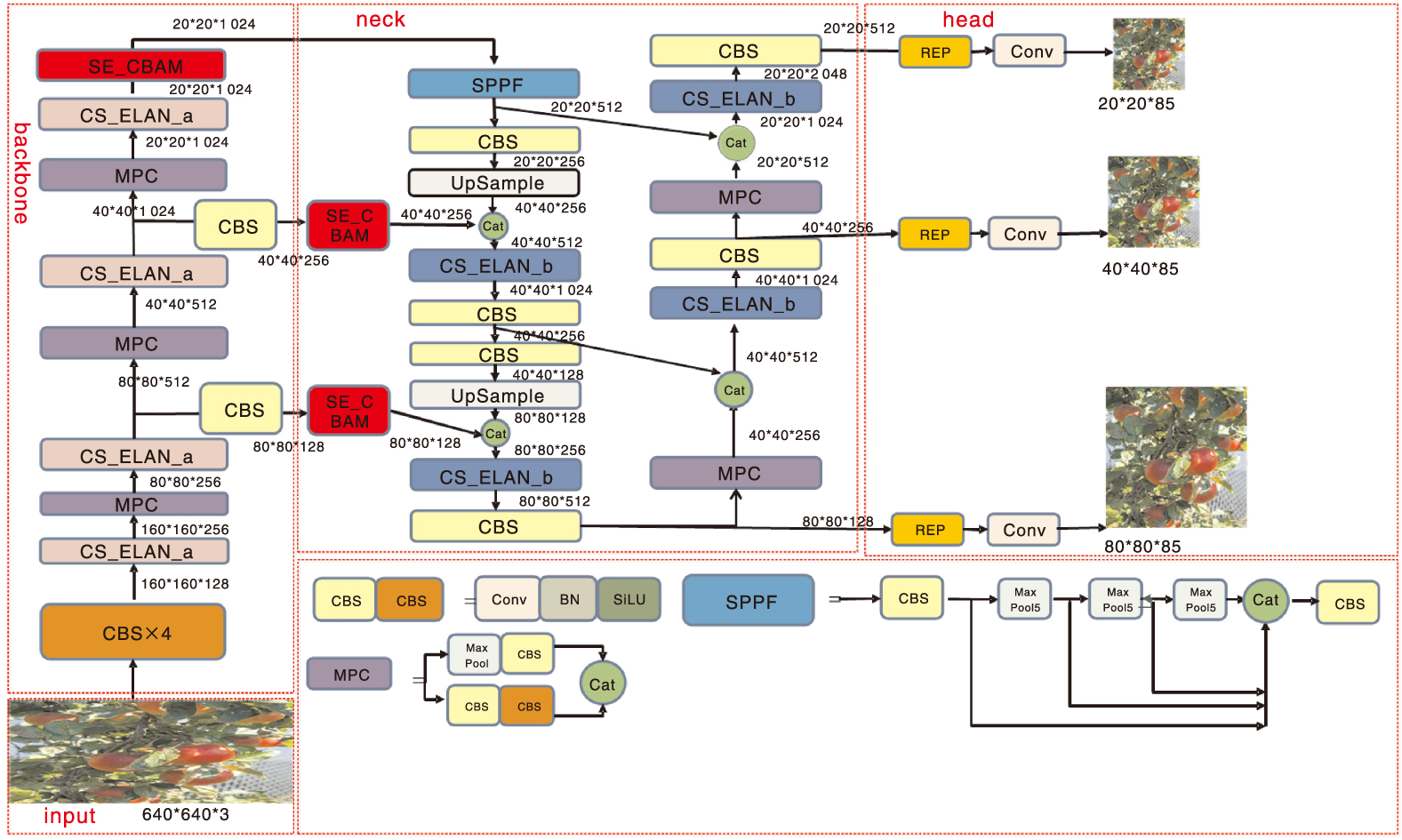

Fig.3 Overall structure of the CS_YOLOv7 network model. input, Input; backbone, Backbone; neck, Neck; head, Head; cat, Concat; UpSample, Upsampling; Conv, Convolution; MaxPool, Max pooling; BN, Batch normalization; SiLU, Sigmoid linear unit (activation function); REP, Re-parameterized convolution; SE_CBAM, Squeeze-and-excitation_convolutional block attention module (channel and spatial attention mechanism). The same as below.

Table 1 Re-clustering of anchor boxes

| Detection layer size | Anchor box size before adjustment | Anchor box size after adjustment |
|---|---|---|
| 80×80 | [12, 16], [19, 36], [40, 28] | [9, 12], [15, 29], [32, 23] |
| 40×40 | [36, 75], [76, 55], [72, 146] | [29, 60], [63, 44], [59, 119] |
| 20×20 | [142, 110], [192, 243], [459, 401] | [171, 132], [231, 292], [550, 483] |
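The anchor re-clustering summarized in Table 1 can be illustrated with a minimal K-means++ sketch. This is not the authors' code: the `1 - IoU` distance, the mean-based centroid update, the function names `iou_wh` and `kmeanspp_anchors`, and the synthetic `demo_boxes` are all assumptions made for illustration. It needs only the Python standard library.

```python
import random


def iou_wh(a, b):
    """IoU of two boxes described only by (width, height), aligned at one corner."""
    inter = min(a[0], b[0]) * min(a[1], b[1])
    union = a[0] * a[1] + b[0] * b[1] - inter
    return inter / union


def kmeanspp_anchors(boxes, k, iters=100, seed=0):
    """Cluster (w, h) pairs into k anchors (assumed distance: 1 - IoU)."""
    rng = random.Random(seed)
    # K-means++ seeding: the first centre is drawn uniformly, each later centre
    # with probability proportional to the squared distance to its nearest centre.
    centroids = [rng.choice(boxes)]
    while len(centroids) < k:
        d2 = [min(1.0 - iou_wh(b, c) for c in centroids) ** 2 for b in boxes]
        if sum(d2) == 0:  # every box coincides with a centre: fall back to uniform
            centroids.append(rng.choice(boxes))
        else:
            centroids.append(rng.choices(boxes, weights=d2, k=1)[0])
    for _ in range(iters):
        # Assignment step: each box joins the centroid it overlaps most.
        clusters = [[] for _ in range(k)]
        for b in boxes:
            best = max(range(k), key=lambda i: iou_wh(b, centroids[i]))
            clusters[best].append(b)
        # Update step: mean width and height; an empty cluster keeps its centroid.
        updated = [
            (sum(w for w, _ in c) / len(c), sum(h for _, h in c) / len(c)) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
        if updated == centroids:
            break
        centroids = updated
    return sorted(centroids, key=lambda c: c[0] * c[1])  # small to large area


# Synthetic (w, h) labels standing in for real annotations (hypothetical data).
_rng = random.Random(1)
demo_boxes = [
    (max(1.0, _rng.gauss(w, 0.15 * w)), max(1.0, _rng.gauss(h, 0.15 * h)))
    for w, h in [(10, 12), (60, 45), (200, 180)]
    for _ in range(50)
]
anchors = kmeanspp_anchors(demo_boxes, k=3)
```

With nine clusters and the real label widths and heights, the sorted output would be split three per detection layer, in the same layout as Table 1.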

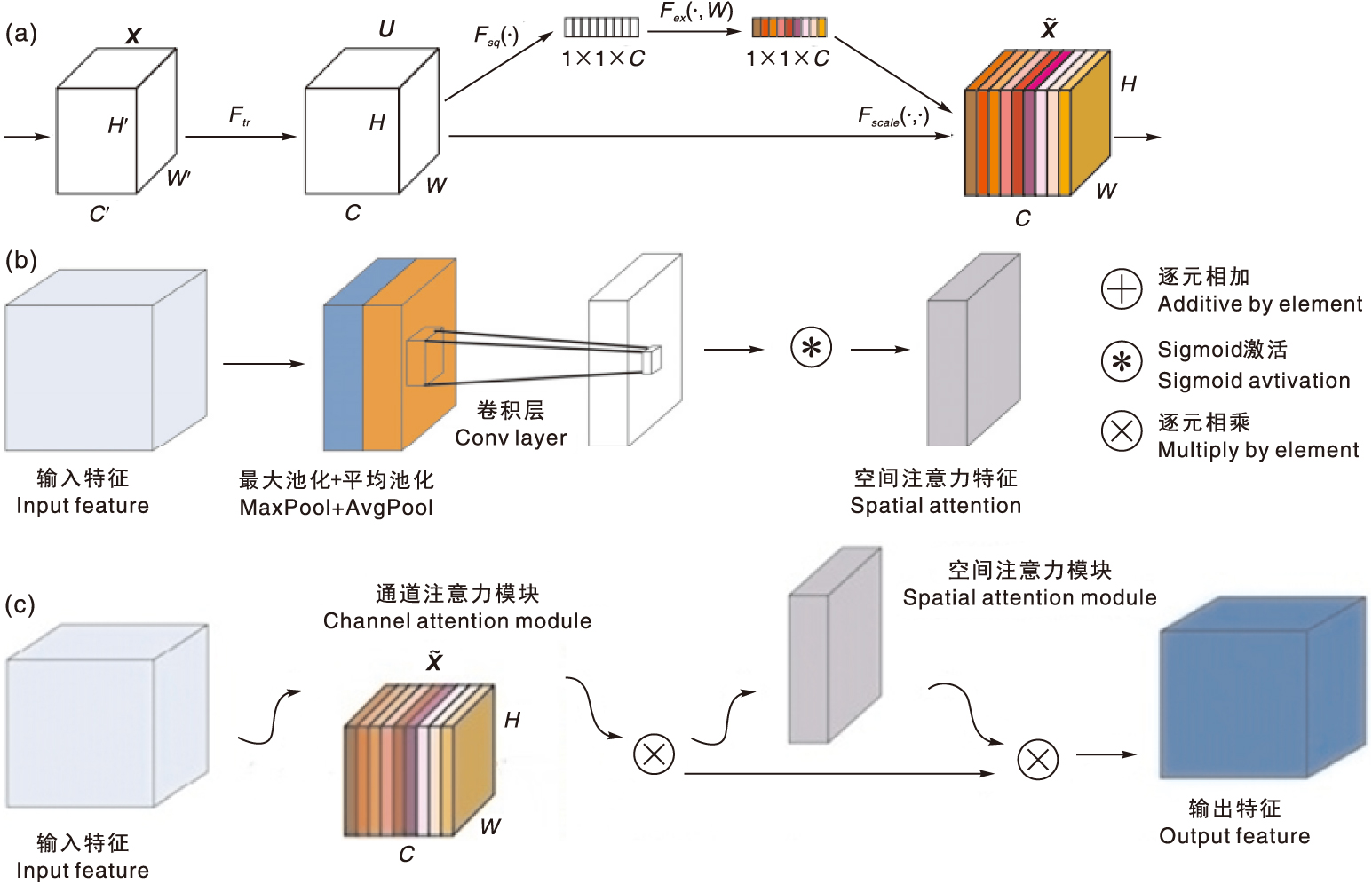

Fig.5 Structure of the SE_CBAM module. a, Squeeze-and-excitation (SE) channel attention module; b, Spatial attention module; c, Convolutional block attention module.
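As a hedged sketch, the two sub-modules named in Fig.5 are commonly written as follows, using the standard squeeze-and-excitation and CBAM spatial-attention definitions. The channel-then-spatial ordering, the reduction ratio $r$, and the $7\times 7$ kernel are assumptions here; this page does not confirm the exact SE_CBAM configuration. For an input feature map $F \in \mathbb{R}^{C \times H \times W}$:

$$
\begin{aligned}
M_c(F) &= \sigma\big(W_2\,\delta(W_1\,\mathrm{GAP}(F))\big), & F' &= M_c(F) \otimes F,\\
M_s(F') &= \sigma\big(f^{7\times 7}([\mathrm{AvgPool}_c(F');\ \mathrm{MaxPool}_c(F')])\big), & F'' &= M_s(F') \otimes F'.
\end{aligned}
$$

Here $\mathrm{GAP}$ is global average pooling over the spatial dimensions, $W_1 \in \mathbb{R}^{C/r \times C}$ and $W_2 \in \mathbb{R}^{C \times C/r}$ are the two fully connected layers, $\delta$ is ReLU, $\sigma$ is the sigmoid function, $\mathrm{AvgPool}_c$ and $\mathrm{MaxPool}_c$ pool along the channel axis, $f^{7\times 7}$ is a convolution with a $7 \times 7$ kernel, and $\otimes$ is element-wise multiplication with broadcasting.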

Table 2 Comparison of performance before and after anchor box re-clustering

| Model | P/% | R/% | mAP@0.5/% |
|---|---|---|---|
| Y | 73.5 | 67.4 | 73.4 |
| G | 73.5 | 69.3 | 74.5 |

Y, model with the original anchor boxes; G, model with the improved anchor boxes.

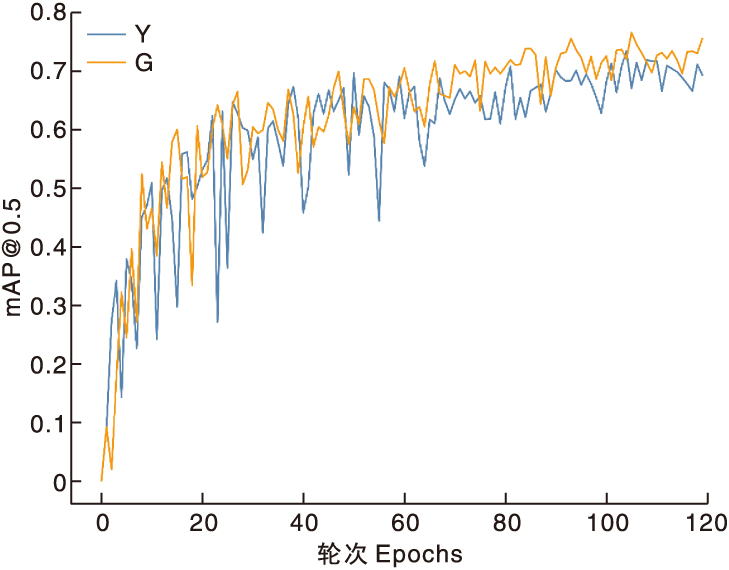

Fig.6 Comparison of iteration curves before and after anchor box re-clustering. mAP@0.5, Mean average precision at an intersection over union (IoU) of 0.5. The same as below. Y represents the model with the original anchor boxes, and G represents the model with the improved anchor boxes.

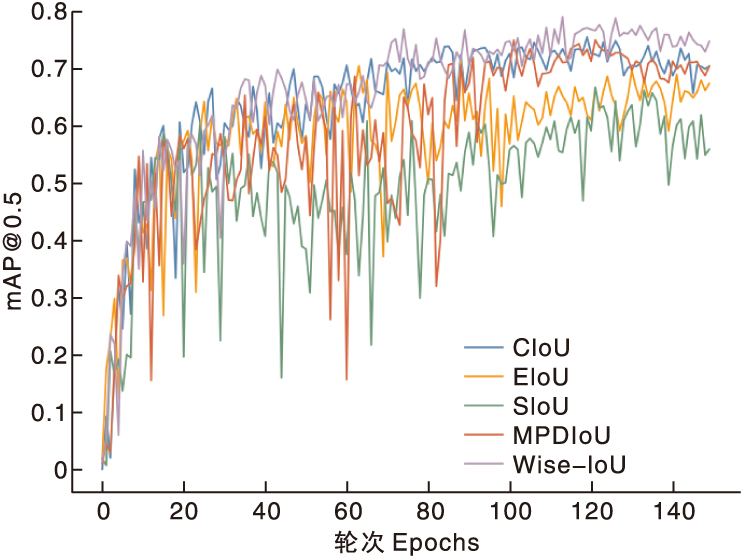

Table 3 Comparison of model performance under different loss functions

| Loss function | P/% | R/% | mAP@0.5/% | v/(frame·s⁻¹) |
|---|---|---|---|---|
| CIoU | 73.5 | 69.3 | 74.5 | 106.3 |
| EIoU | 67.3 | 67.3 | 70.2 | 166.6 |
| SIoU | 68.3 | 59.3 | 65.0 | 169.4 |
| MPDIoU | 79.2 | 63.9 | 74.5 | 147.0 |
| Wise-IoU | 80.8 | 68.7 | 79.1 | 161.3 |
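To make the Wise-IoU row of Table 3 more concrete, the following is a minimal sketch of the v1 form of the loss: the IoU loss scaled by a distance-based attention term. The page does not say which Wise-IoU version the model uses, so the choice of v1 is an assumption, as are the function names `iou` and `wiou_v1`. Later versions add a focusing coefficient on top of this term.

```python
import math


def iou(a, b):
    """IoU of two boxes in (x1, y1, x2, y2) format."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def wiou_v1(pred, target):
    """Wise-IoU v1 sketch: exp(centre distance^2 / enclosing-box diagonal^2) * (1 - IoU)."""
    # Centres of the predicted and ground-truth boxes.
    cx_p, cy_p = (pred[0] + pred[2]) / 2, (pred[1] + pred[3]) / 2
    cx_t, cy_t = (target[0] + target[2]) / 2, (target[1] + target[3]) / 2
    # Width and height of the smallest box enclosing both boxes. In a training
    # framework this denominator is treated as a constant (no gradient); plain
    # Python has no gradients, so that detail does not appear here.
    wg = max(pred[2], target[2]) - min(pred[0], target[0])
    hg = max(pred[3], target[3]) - min(pred[1], target[1])
    attention = math.exp(((cx_p - cx_t) ** 2 + (cy_p - cy_t) ** 2) / (wg ** 2 + hg ** 2))
    return attention * (1.0 - iou(pred, target))


perfect = wiou_v1((0, 0, 10, 10), (0, 0, 10, 10))      # identical boxes
partial = wiou_v1((0, 0, 10, 10), (5, 0, 15, 10))      # half-overlapping boxes
disjoint = wiou_v1((0, 0, 10, 10), (20, 20, 30, 30))   # no overlap
```

The attention term is always at least 1, so a poorly centred prediction is penalized more than the plain IoU loss would penalize it, while a perfectly aligned box still has zero loss.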

Table 4 Results of ablation experiments

| No. | CS_ELAN+SPPF | K-means++ | Wise-IoU | SE_CBAM | N | v/(frame·s⁻¹) | mAP@0.5/% |
|---|---|---|---|---|---|---|---|
| 1 | × | × | × | × | 37 196 556 | 32.6 | 78.3 |
| 2 | √ | × | × | × | 25 226 060 | 166.6 | 73.4 |
| 3 | √ | √ | × | × | 25 226 060 | 106.3 | 74.5 |
| 4 | √ | × | √ | × | 25 226 060 | 166.6 | 71.3 |
| 5 | √ | √ | √ | × | 25 226 060 | 161.3 | 79.1 |
| 6 | √ | √ | √ | √ | 25 367 426 | 151.5 | 80.0 |

√, improvement applied; ×, improvement not applied. N, Number of parameters; v, Detection speed.

Table 5 Comparison of target detection effects of different models

| Model | N | FLOPs/10⁹ | v/(frame·s⁻¹) | mAP@0.5/% | S/MB |
|---|---|---|---|---|---|
| SSD | 24 386 000 | 87.5 | 82.3 | 65.2 | 100.0 |
| Faster R-CNN | 41 753 000 | 83.8 | 55.0 | 65.7 | 314.0 |
| RetinaNet | 32 201 069 | 127.2 | 50.0 | 76.1 | 245.0 |
| YOLOv4 | 63 937 686 | 170.0 | 70.0 | 71.6 | 244.0 |
| YOLOv5x | 87 244 374 | 217.0 | 51.0 | 81.3 | 1 000.0 |
| YOLOv7 | 37 196 556 | 105.0 | 32.6 | 78.3 | 71.0 |
| YOLOv8 | 25 902 640 | 79.3 | 101.9 | 78.4 | 49.6 |
| YOLOv9 | 25 590 912 | 104.0 | 79.2 | 79.5 | 49.2 |
| Tiny-YOLO | 6 014 988 | 13.2 | 588.2 | 72.7 | 6.3 |
| MobileNet-YOLO | 24 616 556 | 41.3 | 161.0 | 78.1 | 49.7 |
| YOLOv12 | 26 454 880 | 89.7 | 107.5 | 80.2 | 53.5 |
| CS_YOLOv7 | 25 367 426 | 88.1 | 151.5 | 80.0 | 48.7 |
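The headline figures quoted in the abstract can be re-derived from the YOLOv7 and CS_YOLOv7 rows of Table 5. The short check below copies those two rows; the dictionary keys and the helper `relative_reduction` are illustrative names, not taken from the paper.

```python
# Values copied from the YOLOv7 and CS_YOLOv7 rows of Table 5.
yolov7 = {"params": 37_196_556, "gflops": 105.0, "fps": 32.6, "map50": 78.3, "size_mb": 71.0}
cs_yolov7 = {"params": 25_367_426, "gflops": 88.1, "fps": 151.5, "map50": 80.0, "size_mb": 48.7}


def relative_reduction(before, after):
    """Percentage by which `after` is smaller than `before`."""
    return (before - after) / before * 100


param_cut = round(relative_reduction(yolov7["params"], cs_yolov7["params"]), 1)  # percent
flops_cut = round(relative_reduction(yolov7["gflops"], cs_yolov7["gflops"]), 1)  # percent
map_gain = round(cs_yolov7["map50"] - yolov7["map50"], 1)                        # percentage points
size_cut = round(yolov7["size_mb"] - cs_yolov7["size_mb"], 1)                    # MB
fps_gain = round(cs_yolov7["fps"] - yolov7["fps"], 1)                            # frame/s

print(param_cut, flops_cut, map_gain, size_cut, fps_gain)
```

The parameter and computation figures are relative reductions, while the mAP@0.5, model size, and speed figures are absolute differences, which is why the abstract reports them in different units.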

Fig.8 Recognition results of different models for young apple fruits under different lighting conditions. The purple circles mark missed or false detections of young apple fruits. The "Early morning sunrise" and "Midday strong sunlight" scenes were photographed at the same fruit tree location at different times of day.

Table 6 Comparison of detection effects on different datasets by different models

| Dataset | Model | P/% | R/% | mAP@0.5/% |
|---|---|---|---|---|
| Subset 1 | YOLOv7 | 76.0 | 78.0 | 74.0 |
| Subset 1 | MobileNet-YOLO | 84.6 | 73.4 | 70.0 |
| Subset 1 | YOLOv12 | 96.0 | 61.3 | 77.4 |
| Subset 1 | CS_YOLOv7 | 78.6 | 76.7 | 77.8 |
| Subset 2 | YOLOv7 | 85.4 | 71.4 | 67.8 |
| Subset 2 | MobileNet-YOLO | 92.0 | 69.1 | 69.2 |
| Subset 2 | YOLOv12 | 89.5 | 60.4 | 76.9 |
| Subset 2 | CS_YOLOv7 | 82.7 | 77.7 | 74.1 |

